This article was first published as part of the MDENet newsletter. MDENet is the expert network for model-driven engineering; you can find out more at www.mde-network.org.

In 2010, David Garlan wrote that “the reality of today’s software systems requires us to consider uncertainty as a first-class concern”. Eleven years later, the only thing that has changed is that we now live in an even more complex and uncertain world. Uncertainty permeates the systems we build, the requirements for which we create them, the processes by which we develop them, the infrastructure on which we base them, and the conditions under which we operate them. Uncertainty has many meanings and takes many forms. It can arise from a lack of knowledge (“epistemic”) or from randomness (“aleatory”). Sometimes new information can help resolve it; other times it is inherently irreducible. Usually, we think about uncertainty in terms of the context in which our systems need to operate, but we must also often deal with uncertainty internal to the workings of our systems. In fact, in 2020, Troya et al. catalogued six types of uncertainty studied by the software modelling research community in more than 120 papers published over the previous 20 years.

Walker et al. suggested thinking about uncertainty in terms of a spectrum from complete certainty to total ignorance. Towards the “certainty” end of the spectrum, we have good-enough models with well-understood error margins. As we move away from certainty, we have models whose outcomes follow known probability distributions. Less certain models cannot rely on such distributions and make do with the relative likelihood of possible scenarios. With still more uncertainty, we cannot even rank these alternative scenarios, but at least we know what the possibilities are. One more step away from certainty, and we are as wise as Socrates in our recognized ignorance. At the other end of the spectrum lies total ignorance: Rumsfeld’s “unknown unknowns”.
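To make the spectrum concrete, here is a minimal sketch, assuming the positions described above can be treated as an ordered scale. The enum member names and the two helper predicates are hypothetical labels chosen for illustration, not terminology taken from Walker et al.

```python
from enum import IntEnum


class UncertaintyLevel(IntEnum):
    """Hypothetical ordered encoding of the uncertainty spectrum.

    Lower values are closer to complete certainty. Member names are
    illustrative labels, not Walker et al.'s own terminology.
    """
    COMPLETE_CERTAINTY = 0
    ERROR_MARGINS = 1          # good-enough model, well-understood error bounds
    KNOWN_DISTRIBUTIONS = 2    # outcomes follow known probability distributions
    RANKED_SCENARIOS = 3       # scenarios with relative likelihoods
    UNRANKED_SCENARIOS = 4     # possibilities known, but cannot be ranked
    RECOGNIZED_IGNORANCE = 5   # we know that we do not know
    TOTAL_IGNORANCE = 6        # "unknown unknowns"


def possibilities_known(level: UncertaintyLevel) -> bool:
    # Up to UNRANKED_SCENARIOS we can at least list the possible scenarios.
    return level <= UncertaintyLevel.UNRANKED_SCENARIOS


def can_rank(level: UncertaintyLevel) -> bool:
    # Up to RANKED_SCENARIOS we can at least order scenarios by likelihood.
    return level <= UncertaintyLevel.RANKED_SCENARIOS


level = UncertaintyLevel.UNRANKED_SCENARIOS
print(possibilities_known(level), can_rank(level))  # True False
```

Using an `IntEnum` lets the "further from certainty" relation fall out of ordinary integer comparison, which is the only property of the spectrum this sketch relies on.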

Uncertainty concepts are found in many formalisms and modelling languages. Sometimes modelling uncertainty is as simple as documenting the systematic error of a function’s output, or as classifying a bug as “unassigned” in a ticketing system until we figure out who should fix it. People also model uncertainty explicitly with purpose-built models, ranging from simple Markov chains to the sophisticated standardization proposal under consideration at the Object Management Group. Facing a wide range of flavours of uncertainty, we thus have an equally wide range of modelling approaches. How easy is it to navigate their combinations and interdependencies?

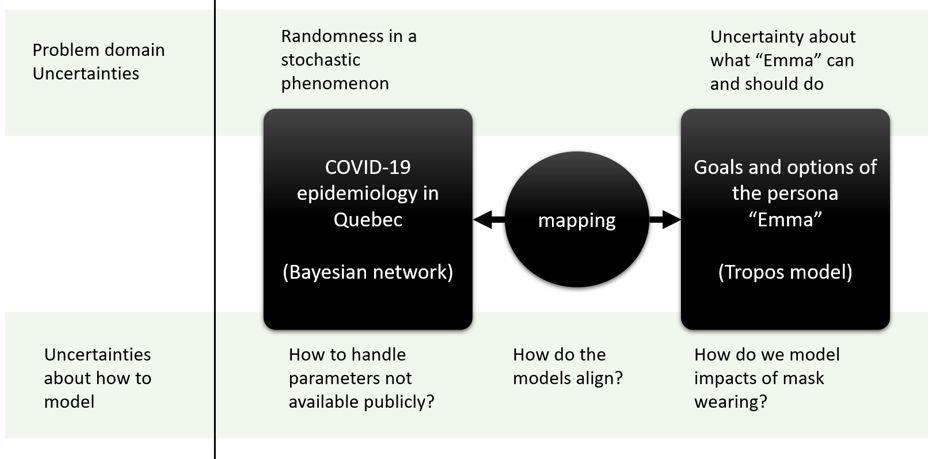

My collaborators and I recently performed a case study to better understand this. We played the role of developers building an app to help people stay safe during the COVID-19 pandemic. As part of early requirements engineering, we created a persona called Emma, who lives in Quebec and wants to use the app to decide how to get dinner. Emma has some dinner options (cooking in, getting take-out) and wants to protect herself and her local community. We modelled Emma’s options using Tropos, a language for modelling goals and requirements. We also modelled a publicly available epidemiological model of COVID-19 in Quebec as a Bayesian network. We then connected these models (see Figure) to show how Emma could relate her personal decisions to their social impacts.

The Quebec epidemiological model and Emma’s goal model are both about uncertainty. The former captures the inherent randomness of a stochastic phenomenon; the latter expresses the different scenarios available to Emma. While modelling, we also documented our own uncertainty as modellers, i.e., about how to build the models. We grappled with questions such as “how should the two models be connected?”, “how should we model this decision?”, and “what should we do with missing values?”. Bayesian networks and goal models were well suited to capturing the uncertain elements of the problem domain. However, capturing the uncertainty of the modelling process itself required a different set of concepts. We captured these uncertainties with DRUIDE, a specialized modelling language that allowed us to keep our uncertainty about the design of the models separate from the uncertainty inherent in the problem. The experience made it clear that the different types of uncertainty required very different modelling approaches and treatments.

I have been working on this kind of “design uncertainty” for over 10 years. My goal is to mine it from the development context (e.g., developer conversations) and/or to capture it in (semi-)formal representations. I then use these representations to perform automated reasoning that preserves uncertainty, with the aim of giving modellers feedback that helps them build better models. Key to this vision is a more general idea: understanding the different meanings of uncertainty is necessary if we are to create modelling infrastructure that lets practitioners reason about many kinds of uncertainty at once, depending on how they are modelled, how they interact, and how they affect each other. Achieving this deeper understanding can only happen in a community like MDENet, which allows researchers and practitioners with many different backgrounds and areas of expertise to freely exchange their unique perspectives.
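What "reasoning that preserves uncertainty" can look like is easiest to see in a toy setting. The minimal sketch below assumes a simple encoding in which a model is a set of named elements and every element the modeller is unsure about is annotated as "May". Each way of keeping or dropping the May elements yields a concretization, and a property check answers True, False, or Maybe depending on whether the property holds in all, none, or some of them. The function names, element names, and the "models must be connected" constraint are hypothetical, loosely echoing the Emma example; this is not DRUIDE or the author's tooling.

```python
from itertools import combinations
from typing import Callable, FrozenSet, Iterable, List

Model = FrozenSet[str]  # hypothetical: a model is just a set of element names


def concretizations(
    base: Iterable[str],
    may: Iterable[str],
    allowed: Callable[[Model], bool] = lambda m: True,
) -> List[Model]:
    """Enumerate every model obtained by keeping or dropping each 'May'
    element, keeping only those that satisfy the `allowed` constraint."""
    base_set = frozenset(base)
    may_list = sorted(set(may))
    result = []
    for k in range(len(may_list) + 1):
        for chosen in combinations(may_list, k):
            model = base_set | frozenset(chosen)
            if allowed(model):
                result.append(model)
    return result


def check(models: List[Model], prop: Callable[[Model], bool]) -> str:
    """Three-valued check: 'True' if prop holds in every concretization,
    'False' if it holds in none, 'Maybe' otherwise."""
    if not models:
        raise ValueError("partial model has no valid concretizations")
    outcomes = {prop(m) for m in models}
    if outcomes == {True}:
        return "True"
    if outcomes == {False}:
        return "False"
    return "Maybe"


# Hypothetical example: we are unsure which dinner option links to the
# epidemiological node, but we know the models must be connected somehow.
base = {"Get dinner", "Cook in", "Get take-out", "Exposure risk"}
may = {"Get take-out -> Exposure risk", "Cook in -> Exposure risk"}


def connected(m: Model) -> bool:
    return any("->" in element for element in m)


models = concretizations(base, may, allowed=connected)
print(len(models))                                               # 3
print(check(models, connected))                                  # True
print(check(models, lambda m: "Cook in -> Exposure risk" in m))  # Maybe
```

Explicit enumeration grows exponentially with the number of May elements, so it only suits toy examples like this one; a practical tool would more likely encode the partial model and the property for a SAT or SMT solver.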
